Top Data Science Jobs in NYC, NY

Fintech • HR Tech

As a Staff Data Scientist at Gusto, you will drive data-informed decision-making, lead projects, collaborate with teams, and mentor others in statistical analysis and experimentation.

Top Skills:

AI Tools, Python, SQL, Statistical Methods

eCommerce • Mobile • Retail

The role involves analyzing fraud and risk data, defining KPIs, collaborating on anti-fraud strategies, and creating tools and dashboards for data accessibility at Whatnot.

Top Skills:

BigQuery, dbt, Python, R, Redshift, Snowflake, Spark, SQL

eCommerce • Information Technology • Sharing Economy • Software

The Staff Data Scientist will drive business strategy through predictive insights, collaborate across teams, and develop solutions to enhance products and reduce losses.

Top Skills:

dbt, Git, Python, SQL

Consumer Web • Healthtech • Professional Services • Social Impact • Software

As a Staff Data Scientist, you will lead product analytics, define measurement strategies, drive cross-team initiatives, and mentor junior data scientists to enhance mental healthcare outcomes.

Top Skills:

Python, R, SQL

Consumer Web • Healthtech • Professional Services • Social Impact • Software

As a Staff Data Scientist for Marketing Analytics, you will lead marketing performance analysis, develop measurement frameworks, and influence strategic decisions to drive patient growth.

Top Skills:

Python, R, SQL

Big Data • Healthtech • HR Tech • Machine Learning • Software • Telehealth • Big Data Analytics

Contribute to product and growth by designing experiments, building predictive models and data pipelines, measuring product engagement, and presenting results to leadership. Collaborate cross-functionally to define metrics, deploy ML solutions, and improve data science practices.

Top Skills:

A/B Testing, AI, Machine Learning, Python, R, SQL, Statistical Modeling

Fintech • Machine Learning • Payments • Software • Financial Services

As a Senior Associate Data Scientist, you'll leverage advanced machine learning techniques on cash flow data to forecast creditworthiness, collaborating with cross-functional teams and utilizing technologies like Python and AWS.

Top Skills:

AWS, Conda, H2O, Python, Spark

Fintech • Mobile • Software • Financial Services

The Data Scientist will develop and implement machine learning models for credit risk and operational areas, collaborate across teams, and enhance data-driven decision-making processes.

Top Skills:

AWS, GCP, Hive, Keras, Lightgbm, Machine Learning Libraries, Sklearn, NoSQL, Python, PyTorch, Sagemaker, Snowflake, SQL, TensorFlow, Xgboost

Fintech • Mobile • Software • Financial Services

The Senior Staff Data Scientist leads data-driven insights for product development and strategy in the investment sector, collaborating with cross-functional teams and optimizing portfolio performance.

Top Skills:

Python, SQL, Tableau

Fintech • Machine Learning • Payments • Software • Financial Services

As a Senior Manager, Data Scientist - Applied AI, you'll lead a team to develop AI-powered financial products, utilizing machine learning and data analysis to enhance customer interactions with Capital One.

Top Skills:

AWS, Hugging Face, Langchain, Lightning, Python, PyTorch, R, Scala, Vectordbs

Fintech • Machine Learning • Payments • Software • Financial Services

Manage data science initiatives to develop machine learning models and collaborate with cross-functional teams for data-driven solutions, focusing on customer-centric innovation.

Top Skills:

AWS, Conda, H2O, Machine Learning, Python, Spark

Fintech • Mobile • Software • Financial Services

As a Senior Staff Data Scientist, you'll leverage data analysis and ML models to support SoFi's Home Loans growth. This includes high-level technical leadership, mentoring, stakeholder communication, and building data pipelines.

Top Skills:

Airflow, dbt, Looker, Python, Sagemaker, Snowflake, SQL, Tableau

New

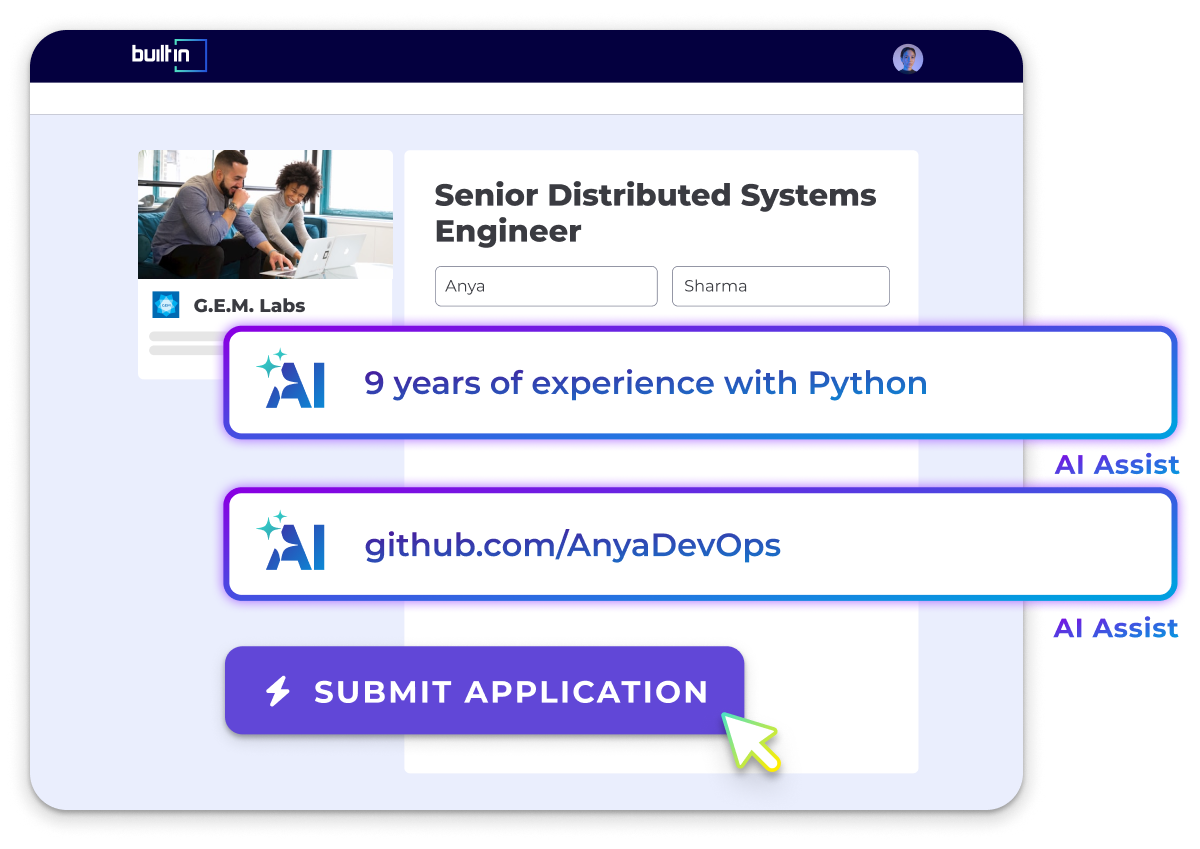

Cut your apply time in half.

Use ourAI Assistantto automatically fill your job applications.

Use For Free

Artificial Intelligence • Cloud • Consumer Web • Productivity • Software • App development • Data Privacy

The Data Scientist will analyze user behavior, create segmentation strategies, design experiments, and track business metrics to drive growth in a SaaS company.

Top Skills:

Hadoop, Python, R, SQL

Insurance • Legal Tech • Social Impact

As a Data Scientist, you will leverage data to improve client experiences by designing metrics, running experiments, and creating data models. You'll support cross-functional teams in decision-making while crafting the company's data science culture.

Top Skills:

Python, R, SQL

Marketing Tech • Mobile • Software

Join Braze as a Field Data Scientist to collaborate with customers on data integration, build APIs and ML models, and drive customer success.

Top Skills:

Airflow, Catboost, GCP, Keras, Kubernetes, Pandas, Python, Scikit-Learn, SQL, TensorFlow, Terraform, Xgboost

Fintech • Machine Learning • Payments • Software • Financial Services

The Data Scientist collaborates with teams to develop AI solutions using large language and visual models for data-driven decision-making in customer services.

Top Skills:

AWS, Hugging Face, Langgraph, Llamaindex, PyTorch, SQL, Weights And Biases Weave

Fintech • Machine Learning • Payments • Software • Financial Services

As a Principal Associate Data Scientist, you'll build machine learning models, collaborate with cross-functional teams, and drive data insights to improve business decisions.

Top Skills:

AWS, Conda, H2O, Python, Spark, SQL

Artificial Intelligence • Big Data • Healthtech • Machine Learning • Analytics • Biotech • Generative AI

The Data Scientist II will lead RWE studies, derive insights from real-world clinical data, and contribute to methodologies using advanced statistical techniques and AI tools, collaborating closely with pharmaceutical partners.

Top Skills:

AWS, BigQuery, Google Cloud Platform, R, SQL

Fintech • Machine Learning • Payments • Software • Financial Services

As a Principal Associate, Data Scientist, you will lead fraud detection strategies using machine learning and collaborate with cross-functional teams to enhance fraud models and deliver innovative solutions.

Top Skills:

AWS, Conda, H2O, Python, Spark, SQL

Big Data • Healthtech • HR Tech • Machine Learning • Software • Telehealth • Big Data Analytics

We seek a Staff Data Scientist to lead projects on provider performance analysis, build predictive models, and mentor team members, impacting healthcare quality and costs.

Top Skills:

Argo, AWS, dbt, Jupyter Notebooks, Python, Snowflake, SQL, Terraform

Fintech • Machine Learning • Payments • Software • Financial Services

As a Principal Associate Data Scientist, you will develop credit underwriting models using machine learning and statistical methodologies, collaborate with cross-functional teams, and contribute to innovative modeling practices.

Top Skills:

AWS, Conda, Python, Spark, SQL

Artificial Intelligence • Cloud • Fintech • Information Technology • Insurance • Financial Services • Big Data Analytics

The Senior Associate - Data Scientist will develop AI and machine learning solutions, focusing on model lifecycle management and delivering AI initiatives in collaboration with business teams.

Top Skills:

AWS, Bedrock, Databricks, Python, Sagemaker, Snowflake, SQL, Streamlit

Fintech • Information Technology • Insurance • Financial Services • Big Data Analytics

The Data Scientist will design, build, and deploy machine learning solutions for automating claims adjudication in pet insurance, collaborating across teams, ensuring production readiness and ongoing optimization.

Top Skills:

AWS, Azure, GCP, Power BI, Python, PyTorch, Scikit-Learn, Spacy, SQL, TensorFlow, Transformers

Artificial Intelligence • Professional Services • Business Intelligence • Consulting • Cybersecurity • Generative AI

As a Senior Manager in AI Data Science, you'll lead innovative solutions, manage project execution, mentor teams, and engage with clients to drive strategic initiatives.

Top Skills:

AI, Data Science, Dataops, DevOps, ML, Software Engineering

Blockchain • Fintech • Payments • Financial Services • Cryptocurrency • Web3 • Infrastructure as a Service (IaaS)

As a Senior Data Scientist in Fraud Risk, you'll develop metrics, real-time monitoring systems, and implement predictive machine learning models to mitigate fraud while balancing customer experience.

Top Skills:

ML Models, Python, SQL
